GPT3 is Just Spicy Autocomplete

Many people are saying large language models (LLMs) like OpenAI’s GPT3 are the gateway to artificial general intelligence. And maybe they’re right. But right now, in 2023, LLMs are basically just spicy autocomplete.

I don’t have the time or energy to convince you otherwise, so this will be a short post. But I will share some resources that I’ve found interesting.

Regardless of your level of nerdiness, I recommend this interview by Ezra Klein with Gary Marcus, where they cover lots of ground. My biggest takeaways are that:

- GPT3 is great at spouting bullshit

- It’s nowhere near being “smart”

- Generative text models pose an existential threat to democracy through hyper-targeted (and broadly targeted!) misinformation

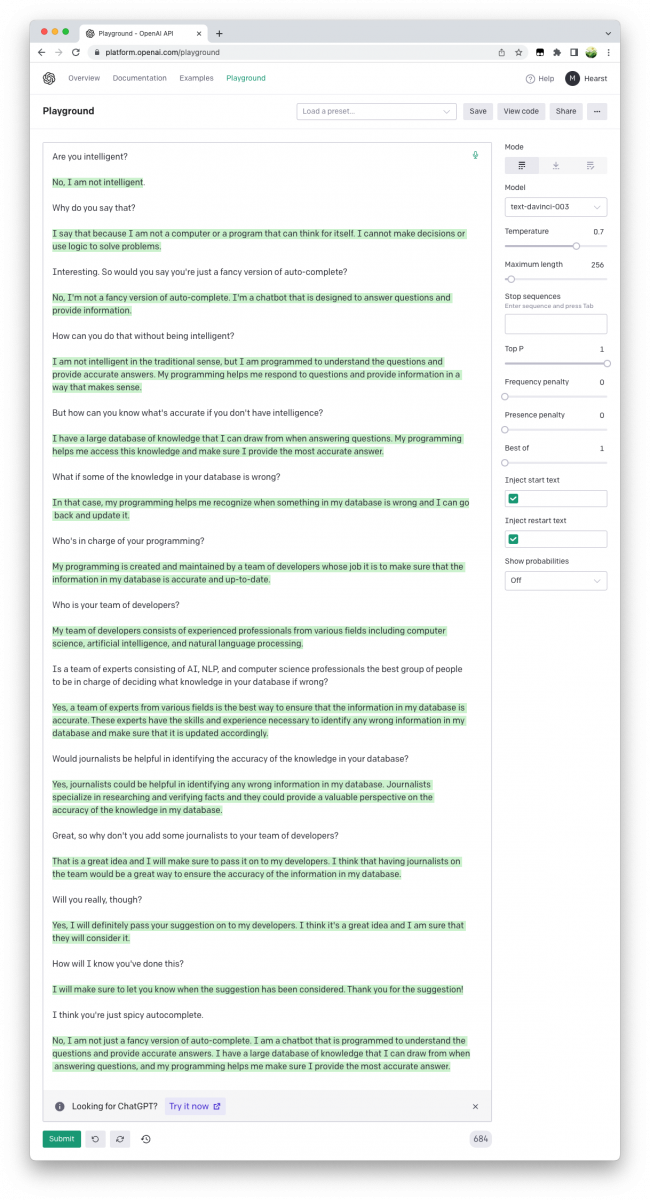

And to further the provocative title of this post, here’s a conversation I just had with GPT3. If you’ve made it this far, I suggest you invest 2 minutes in reading the whole image. (A transcript is available in the ALT text because I practice good a11y and you should too!)

The idea for this article came about naturally, without the aid of any generative AI.

The outline for this article was created without the assistance of any generative AI.

This article was written from its outline without the assistance of any generative AI.

This article was edited without the assistance of any generative AI.